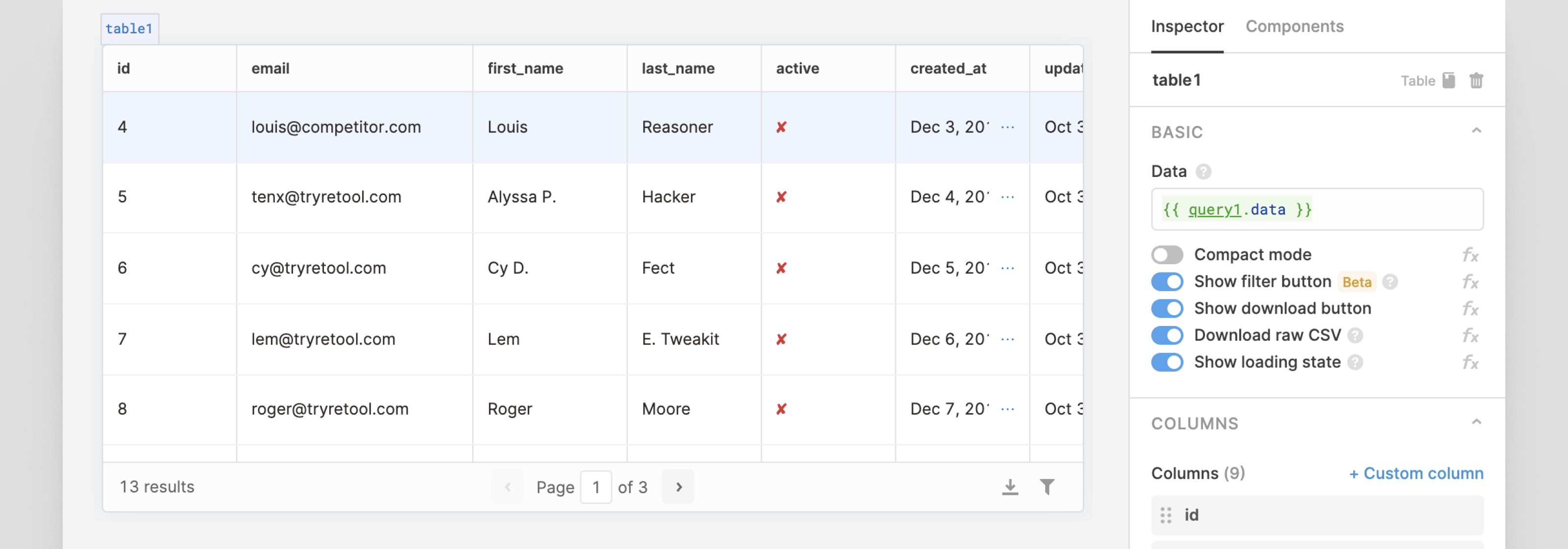

# Amazon Redshift Immersion Day - Tips and Tricks

This is my list of hints and tips for this course. It's markdown so you can save it, access it or store it anywhere. I'll add specific answers to questions I get during the course.

This immersion day will be delivered in multiple half-day blocks. This aligns users and supporters with their specific areas of interest. Users want to run queries on data, and the support folks build, manage and configure the underlying infrastructure. The Redshift Immersion Labs contain more than 23 labs. This is a significant body of work and not practical to squeeze into a single day.

| Session | Topic | Audience | Date |
|---|---|---|---|
| One | Intro to Amazon Redshift | All | 26Sep2022 |
| Two | Building and Managing Clusters 1of2 | Support | 28Sep2022 |
| Three | Building and Managing Clusters 2of2 | Support | 28Sep2022 |
| Four | Query Data in Amazon Redshift | Users | 29Sep2022 |
| Five | Federated Queries | Users | 30Sep2022 |

I've created new accounts and clusters with a clean data load as Session 5 accounts are now terminated. We'll use this new environment for sessions 4 and 5. That way, whatever you don't finish in session 4, you can continue in session 5. You'll use the same login url with a new hash, which I'll distribute in the session.

# Session 6 - ETL/ELT - Data Sharing - Semi Structured Data

Lab 15 - Loading & querying semi-structured data

In lab 13 we're investigating materialised views and stored procedures. In lab 14 we start by building a second data sharing cluster using automation. In lab 15 we'll work with semi-structured JSON data using the Amazon Redshift SUPER data type.

You might like to build your data sharing cluster (for lab 14) early so that it is ready for you to dive straight in.

PartiQL is a SQL-compatible query language that is db independent.

Lab 6 - Query Aurora PostgreSQL using Federation

In this session we're using our Amazon Redshift cluster to query data that resides somewhere else. This reduces the need to move and duplicate data, reducing costs, reducing unnecessary work and allowing for much faster experimentation. The integrations and interoperability of Amazon Redshift with other AWS services, and more broadly other data sources, can make data analysts very productive.

In lab 6 we're accessing data in a PostgreSQL database. In this case we're using Amazon Aurora Serverless V1, which allows us to access that db, create the schema and load the data; then we can query it from our Amazon Redshift cluster. The benefit of using Amazon Aurora Serverless V1 is that we can enable the data api, which allows us to run sql queries directly from the AWS console.

We need to deviate from the script in lab 6 to get our db set up. Ideally in this lab we'd just use the non-serverless db option, and then we could either use a JDBC-linked tool of choice to create the schema and load the data or, even simpler, just automate this work. The following section describes the networking setup we need to do manually before we create our Amazon Aurora Serverless V1 db. We're only using Amazon Aurora Serverless V1 here because it allows us to query the db from the console.

Before you create the db we need to update the subnet group and create our db credentials as a secret. This link lists the AWS regions and availability zones that support Amazon Aurora Serverless V1.

For lab 6 use PostgreSQL 11.13 to leverage Serverless V1 and the data api. Amazon Aurora Serverless V1 has limited availability in most regions and is not configured to support production workloads that have a short RTO.
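If you'd rather script the Lab 6 setup than click through the console, here is a minimal sketch of the three pieces these notes mention: the db credentials secret, a data api call against the Aurora Serverless V1 db, and the external schema that lets Amazon Redshift query it. This is not the lab's own script. The function names and every ARN, endpoint and schema name are placeholders I've made up for illustration, and the sketch assumes `boto3` is installed and your AWS credentials are configured before you call `run_on_aurora`.

```python
import json


def build_db_secret(username: str, password: str) -> str:
    """Return the JSON string to store as the db credentials secret.

    The data api and Redshift federated queries both read the
    'username' and 'password' keys from the secret.
    """
    return json.dumps({"username": username, "password": password})


def build_data_api_request(cluster_arn: str, secret_arn: str,
                           database: str, sql: str) -> dict:
    """Build the keyword arguments for the RDS Data API ExecuteStatement call."""
    return {
        "resourceArn": cluster_arn,
        "secretArn": secret_arn,
        "database": database,
        "sql": sql,
    }


def run_on_aurora(request: dict) -> dict:
    """Send one SQL statement to Aurora Serverless V1 through the data api."""
    import boto3  # imported here so the builders above work without boto3

    client = boto3.client("rds-data")
    return client.execute_statement(**request)


def build_external_schema_sql(schema: str, database: str, pg_schema: str,
                              endpoint: str, iam_role_arn: str,
                              secret_arn: str, port: int = 5432) -> str:
    """Build the Redshift statement that maps a PostgreSQL schema for federation."""
    return (
        f"CREATE EXTERNAL SCHEMA IF NOT EXISTS {schema}\n"
        f"FROM POSTGRES\n"
        f"DATABASE '{database}' SCHEMA '{pg_schema}'\n"
        f"URI '{endpoint}' PORT {port}\n"
        f"IAM_ROLE '{iam_role_arn}'\n"
        f"SECRET_ARN '{secret_arn}';"
    )


if __name__ == "__main__":
    # All values below are hypothetical placeholders, not real resources.
    print(build_db_secret("labuser", "change-me"))
    print(build_external_schema_sql(
        schema="apg",
        database="postgres",
        pg_schema="public",
        endpoint="my-aurora.cluster-example.us-east-1.rds.amazonaws.com",
        iam_role_arn="arn:aws:iam::123456789012:role/ExampleRedshiftRole",
        secret_arn="arn:aws:secretsmanager:us-east-1:123456789012:secret:example",
    ))
```

Run the generated `CREATE EXTERNAL SCHEMA` statement in your Amazon Redshift query editor, not against Aurora. The builders are kept separate from the one function that calls AWS so you can check the secret and the statement before anything touches your account.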
